The Thingamabobas began with two weeks of R&D, which took place in September 2021 and was supported by Lakeside Arts, Nottingham, UK. Rachel and I worked together to develop ideas, and in the first week we produced a number of sketches with conceptual ideas for the sculptures. We also researched various familiar and unfamiliar technologies, such as machine learning and robotics.

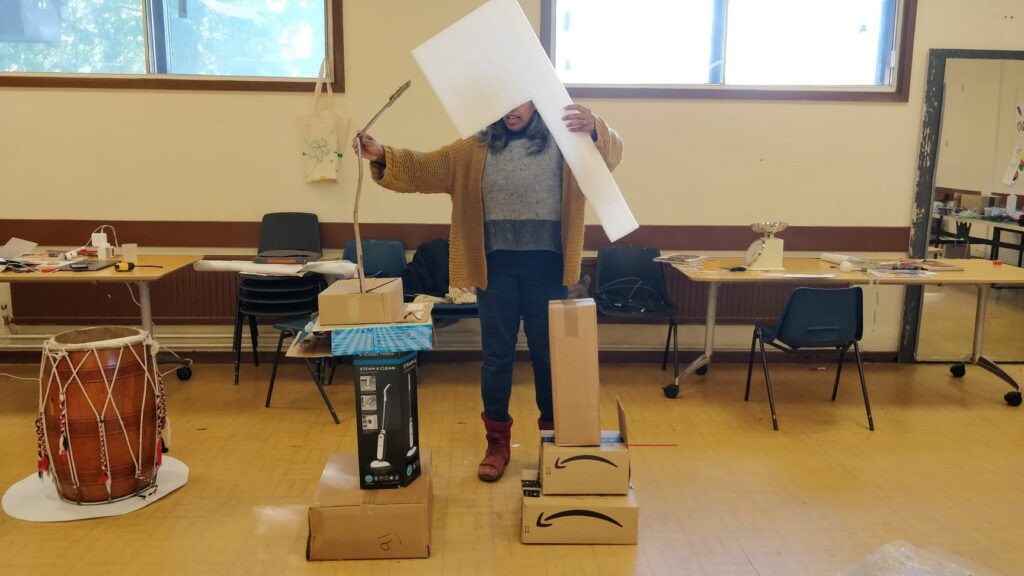

In week two, we invited artists Michelle Reader and Liz Clark, along with AI specialist Doratha Vinkemeier, to further explore and build prototypes of the sculptures from cardboard boxes. This blog is visual evidence of our R&D.

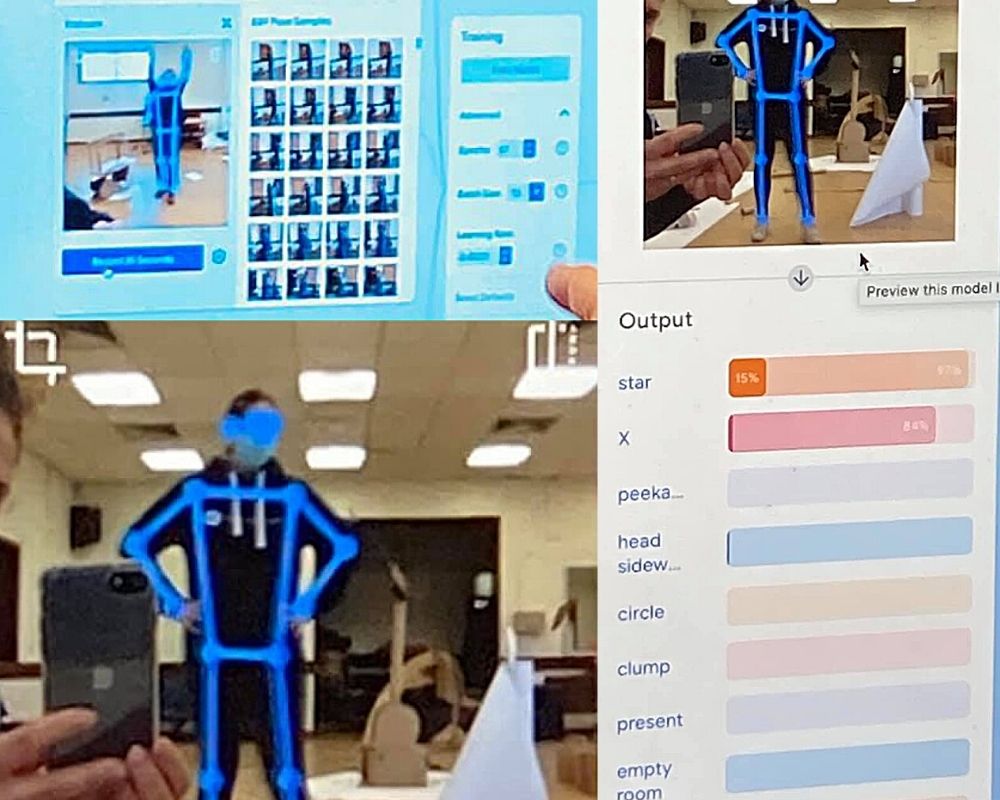

Exploring PoseNet for full-body recognition

Working with choreographer Liz and AI researcher Doratha, we explored the open-source software PoseNet, from Google Creative Lab. It’s a machine learning model that allows for ‘real-time human pose estimation in the browser’, using a webcam on a laptop.

We were looking at how accurately it picks up a moving person. We recorded a number of different movement patterns to understand what the camera can see.
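For anyone curious how "what the camera can see" could be put into numbers, here is a rough sketch in TypeScript. It is an illustration under stated assumptions, not the code used during the R&D. The `Keypoint` and `Pose` shapes mirror what PoseNet's `estimateSinglePose` returns (a `part` name, a confidence `score`, and a `position` for each body part). The function name `visibilityByPart` and the 0.5 confidence threshold are hypothetical choices made for this example.

```typescript
// Shape of one PoseNet result: a confidence score per named body part.
interface Keypoint {
  part: string;
  score: number;
  position: { x: number; y: number };
}

interface Pose {
  score: number;
  keypoints: Keypoint[];
}

// For each body part, return the fraction of recorded frames in which
// the model's confidence reached minScore.
// The 0.5 default is an assumption for illustration only.
function visibilityByPart(
  frames: Pose[],
  minScore: number = 0.5
): Record<string, number> {
  const hits: Record<string, number> = {};
  for (const pose of frames) {
    for (const kp of pose.keypoints) {
      const seen = kp.score >= minScore ? 1 : 0;
      hits[kp.part] = (hits[kp.part] || 0) + seen;
    }
  }
  const result: Record<string, number> = {};
  for (const part of Object.keys(hits)) {
    // hits is only populated when there is at least one frame,
    // so frames.length is never zero here.
    result[part] = hits[part] / frames.length;
  }
  return result;
}
```

Feeding in the poses captured during one recorded movement pattern would give a value between 0 and 1 for each body part. A low value for, say, the ankles would suggest that the camera tends to lose the feet during that movement.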

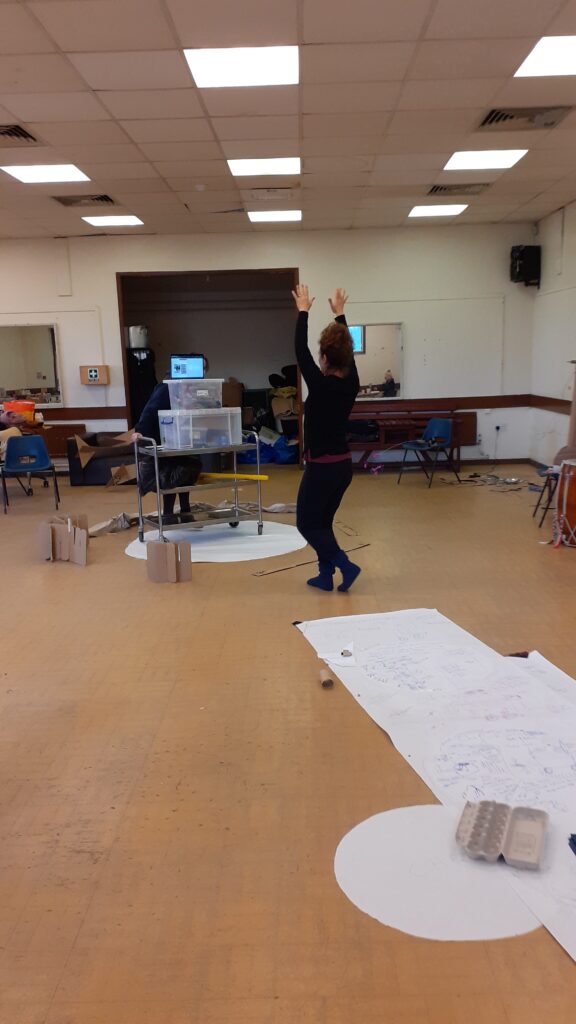

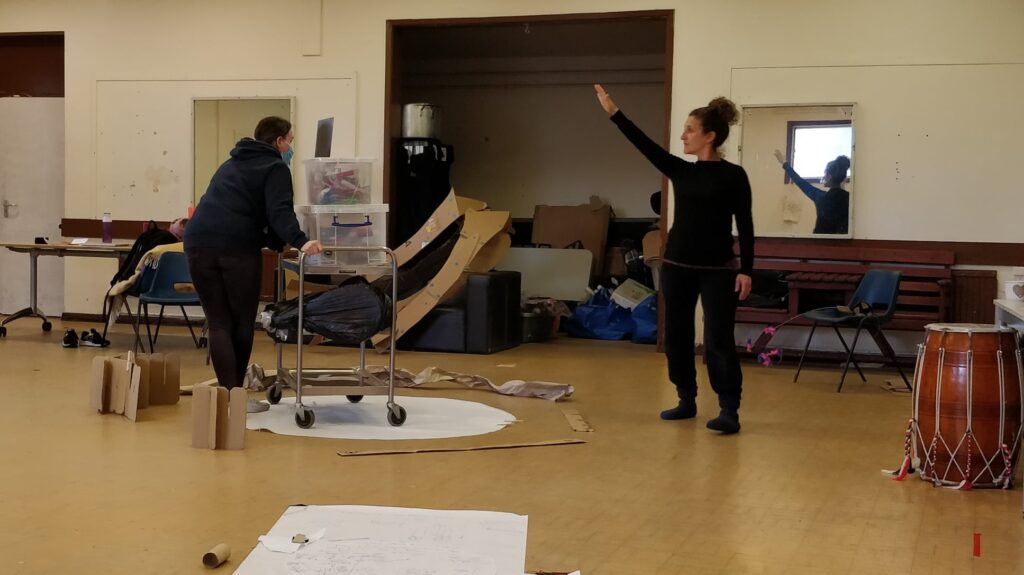

Capturing movements

A laptop is used to track Liz as she moves around the trolley, while the trolley, which carries a camera, follows her.